Explained: What is Cerebras and why is everyone suddenly talking about it

AI chip upstart’s blockbuster IPO, wafer-scale ‘superchip’ design and marquee partners like OpenAI and Amazon are fueling talk of a serious new rival in the AI infrastructure race

AI chip startup Cerebras Systems has become one of the hottest names in tech after investor demand for its IPO surged. The company reportedly increased both the size and pricing of its public offering after orders exceeded available shares by more than 20 times. At the upper end, the IPO could raise nearly $4.8 billion, which would make it among the largest listings of 2026 so far.

The excitement comes from one central narrative: Cerebras is being positioned as a potential challenger to NVIDIA Corporation in the AI infrastructure race.

Unlike most chip startups, Cerebras is not trying to build slightly cheaper or slightly faster GPUs. Instead, it has designed a different architecture for AI computing. That positioning has also attracted major partners including OpenAI and Amazon.

What exactly is Cerebras?

Cerebras is an American AI semiconductor company headquartered in Sunnyvale, California. It was founded in 2015 by Andrew Feldman, Gary Lauterbach, Michael James, Sean Lie and Jean-Philippe Fricker. Feldman leads the company as CEO.

The company’s core goal is relatively straightforward:

Build AI systems that can process massive AI models faster and more efficiently than traditional GPU clusters.

That mission matters because the AI industry is running into a scaling problem. Training and running models like GPT, Claude or Llama increasingly require enormous GPU clusters, huge amounts of energy, and expensive networking infrastructure.

Cerebras believes current AI computing systems are becoming too dependent on linking thousands of GPUs together. Instead, it argues AI workloads should run on much larger chips with fewer communication bottlenecks.

What is Cerebras’ chip and why is it unusual?

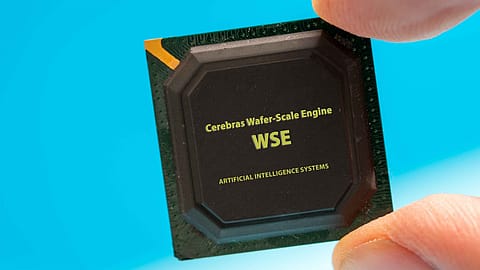

Cerebras is best known for its “Wafer-Scale Engine” (WSE), currently in its third generation, the WSE-3.

Normally, semiconductor companies cut a silicon wafer into many smaller chips. Cerebras instead uses almost the entire wafer as one giant processor. The company says this allows it to pack trillions of transistors and hundreds of thousands of AI cores onto a single chip.

The company claims this design reduces the amount of time AI systems spend moving data between processors, which is one of the biggest bottlenecks in AI computing.

To put it simply, Nvidia builds AI systems by connecting thousands of powerful GPUs together, whereas Cerebras tries to build one enormous “superchip” that can handle more of the work internally.

How is Cerebras different from Nvidia?

Nvidia dominates AI computing through GPU clusters. Companies scale AI workloads by connecting many GPUs together using interconnect technologies like NVLink. This model works extremely well and powers much of today’s AI boom. But as AI models grow larger, GPUs spend an increasing share of their time communicating with each other across servers.

On the other hand, Cerebras argues that too much AI computing power is being wasted on communication overhead. Its wafer-scale architecture tries to keep more computing on one giant processor, reducing the need for constant data transfers between chips.
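The communication-overhead argument can be illustrated with a deliberately simple toy model. The sketch below is not taken from Cerebras or Nvidia: the function name, the even split of work across chips, and the assumption that communication cost grows linearly with the number of peer chips are all hypothetical simplifications, and the input numbers are purely illustrative.

```python
def communication_fraction(compute_s: float, comm_s_per_peer: float, num_chips: int) -> float:
    """Share of one step's time spent on chip-to-chip communication (toy model).

    Assumptions (hypothetical, for illustration only):
      - total compute time `compute_s` is split evenly across `num_chips`
      - each chip pays `comm_s_per_peer` seconds per peer it exchanges data with
    """
    if num_chips < 1:
        raise ValueError("num_chips must be at least 1")
    compute = compute_s / num_chips               # per-chip compute time shrinks as chips are added
    comm = comm_s_per_peer * (num_chips - 1)      # communication time grows as chips are added
    return comm / (compute + comm)


if __name__ == "__main__":
    # Illustrative inputs: 100 s of total compute, 10 ms of traffic per peer.
    for chips in (1, 8, 64, 512):
        share = communication_fraction(100.0, 0.01, chips)
        print(f"{chips:>4} chips: {share:.1%} of step time spent communicating")
```

In this model a single chip spends no time on inter-chip communication, and the share rises as more chips are linked together. Real systems overlap communication with computation and use more efficient collective operations, so the sketch only conveys the direction of the trade-off, not its actual size.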

Nvidia’s memory is attached to each GPU, but Cerebras separates memory and compute, which it says helps support larger AI models more efficiently.

Why is inference suddenly so important?

Inference is quickly becoming the next major battleground in AI infrastructure. According to a Google blog, AI inference refers to “the ‘doing’ part of artificial intelligence. It's the moment a trained model stops learning and starts working, turning its knowledge into real-world results.”

As AI adoption scales globally, inference costs are exploding. This is where Cerebras is trying to differentiate itself by claiming that its systems can generate AI responses significantly faster than traditional GPU-based systems in certain workloads.

That focus has attracted companies looking for alternatives to Nvidia infrastructure.

What is OpenAI’s connection with Cerebras?

OpenAI is both strategically and commercially important to Cerebras.

Reuters reported that OpenAI agreed to purchase up to 750 megawatts of computing power from Cerebras over three years in a deal reportedly worth more than $10 billion. Another report later suggested the arrangement could exceed $20 billion and may include an equity stake.

This matters because OpenAI has been actively exploring alternatives to Nvidia as AI demand surges.

What about Amazon and AWS?

Amazon, through Amazon Web Services, has also partnered with Cerebras.

Reports suggest that AWS plans to offer Cerebras chips through its cloud infrastructure, specifically for AI inference workloads.

The partnership is notable because Amazon already develops its own AI chips through Trainium and Inferentia. Yet AWS is still working with Cerebras, suggesting cloud providers increasingly want multiple AI hardware options rather than depending entirely on Nvidia.

Is Cerebras actually a threat to Nvidia?

Not immediately. Nvidia still dominates the AI accelerator market through its software stack, ecosystem lock-in, hyperscaler relationships, and massive manufacturing scale.

Cerebras remains much smaller. But the company has become important because it represents a different way of thinking about AI computing at a time when the industry is actively searching for alternatives to Nvidia’s GPU-heavy infrastructure.